digiKam 7.0.0 is released

Dear digiKam fans and users,

Just in time to get you into the holiday spirit, we are proud to announce the final release of digiKam 7.0.0 today. This version is the result of a long development cycle that started one year ago, during which we introduced new features and plenty of fixes. Check out some of the highlights listed below and discover all the changes in detail.

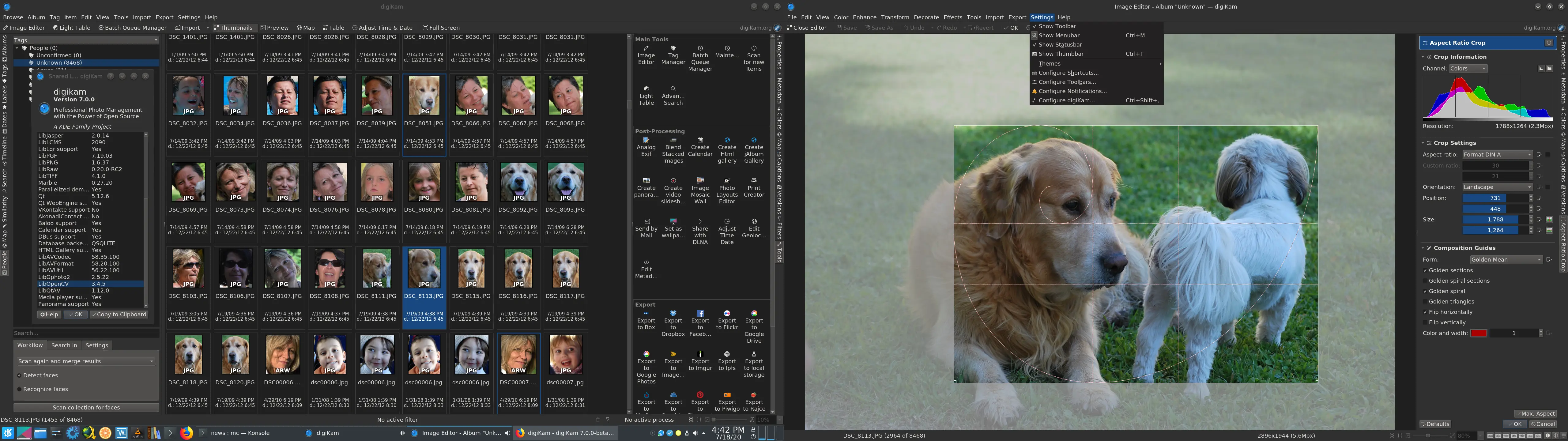

Deep-Learning Powered Faces Management

For many years, digiKam has provided an important feature dedicated to detecting and recognizing faces in photos. The algorithms used in the background (not based on deep learning) were old and had been unchanged since the first revision that included this feature (digiKam 2.0.0). They were not powerful enough to automate the faces-management workflow.

Until now, the complex methodologies that analyzed image contents to isolate and tag people’s faces used the classical feature-based Cascade Classifier from the OpenCV library. This works, but does not provide a high rate of positive results. Face detection gives good results about 80% of the time, while analysis is not too bad but requires a lot of user feedback to confirm whether or not what it has detected is really a face. Also, according to user feedback from Bugzilla, Face Recognition does not provide a good experience when it comes to an auto-tag mechanism for people.

During the summer of 2017 we mentored a student, Yingjie Liu, who worked on the integration of Neural Networks into the Face Management pipeline based on the Dlib library. The result was mostly demonstrative and very experimental, with poor computation speed results. We saw this as a technical proof of concept, but not usable in production. The approach to resolve the problem took a wrong turn and that is why the deep learning option in Face Management was never activated for users.

We tried again this year, and a complete rewrite of the code was successfully completed by a new student named Thanh Trung Dinh.

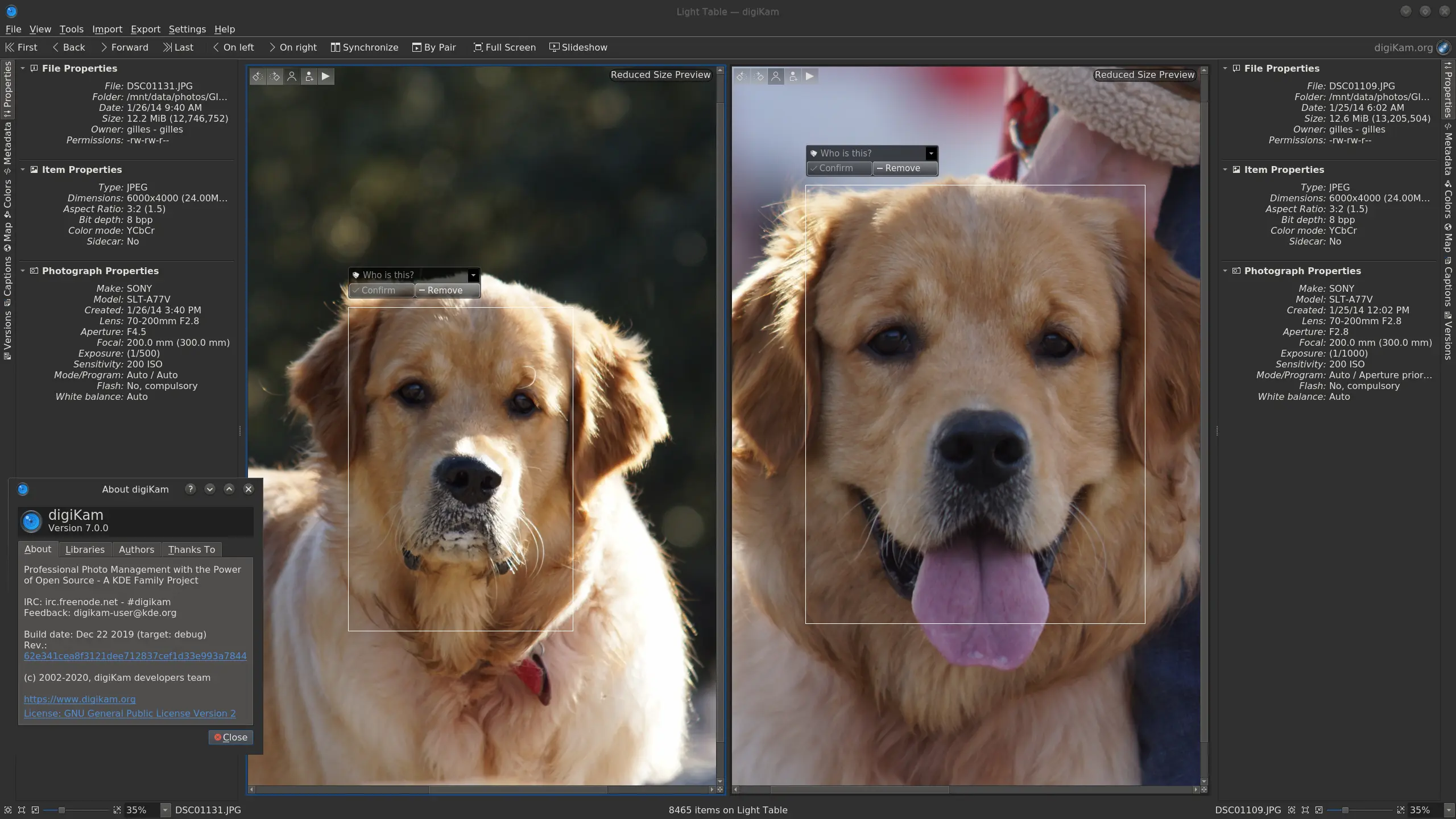

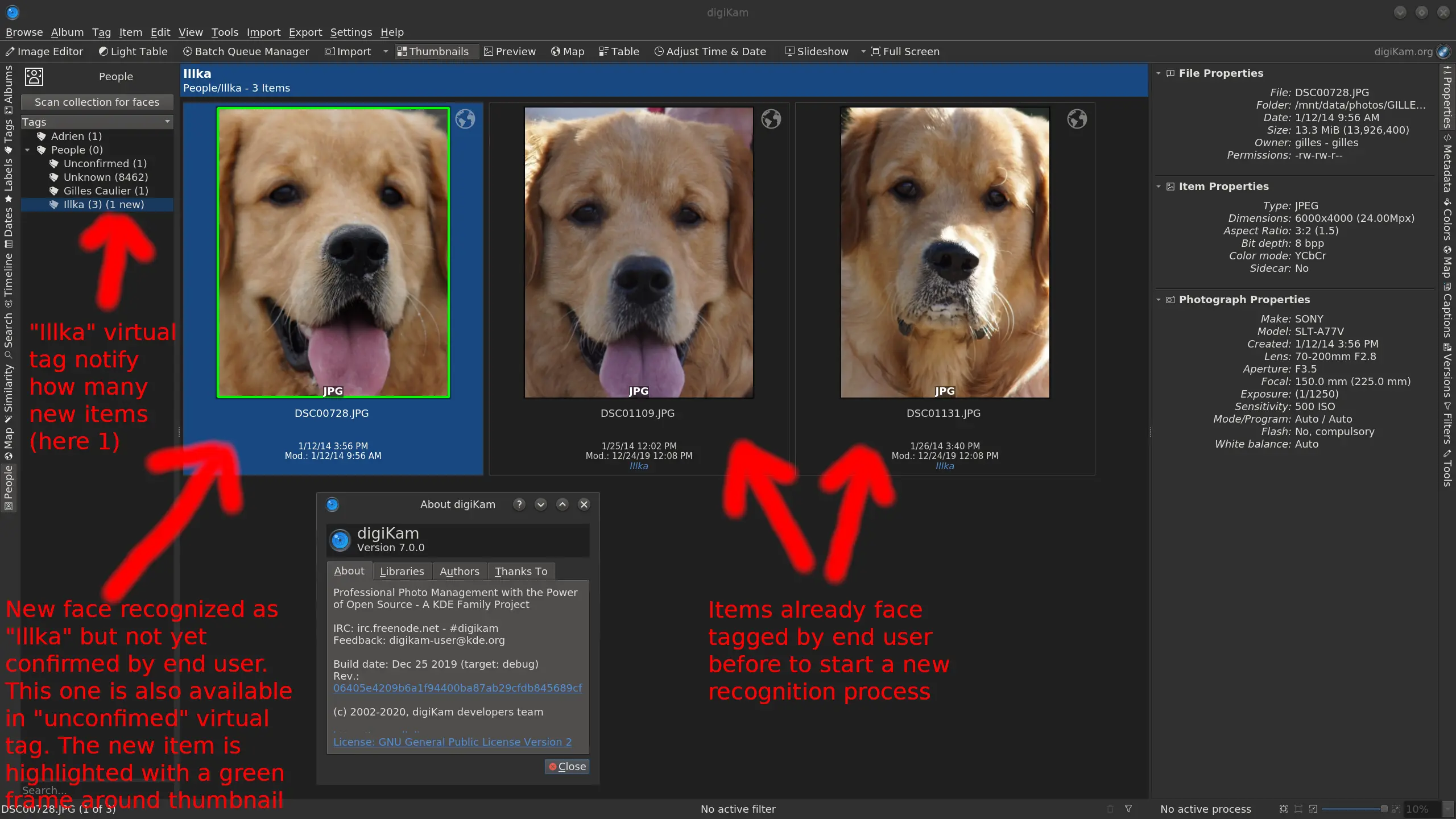

The goal of this project was to leave behind all the old ideas and port the detection and the recognition engines to more modern deep-learning approaches. The new code, based on recent Deep Neural Network features from the OpenCV library, uses neural networks with pre-trained data models dedicated to Face Management. No learning stage is required to perform face detection and recognition. We have saved coding time, gained run-time speed, and improved the success rate, which reaches 97% true positives. Another advantage is that it is able to detect non-human faces, such as those of dogs, as you can see in this screenshot.

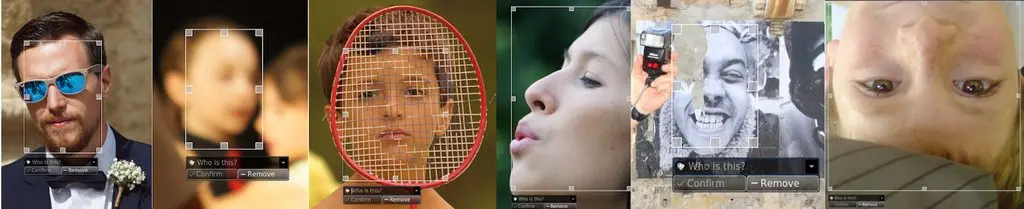

But there are more improvements to face detection. The neural network model that we use is a really good one, as it can detect blurred faces, covered faces, profiles of faces, printed faces, faces turned away, partial faces, etc. Processing huge collections gives excellent results with a low level of false positives. See examples below of face detection challenges performed by the neural network.

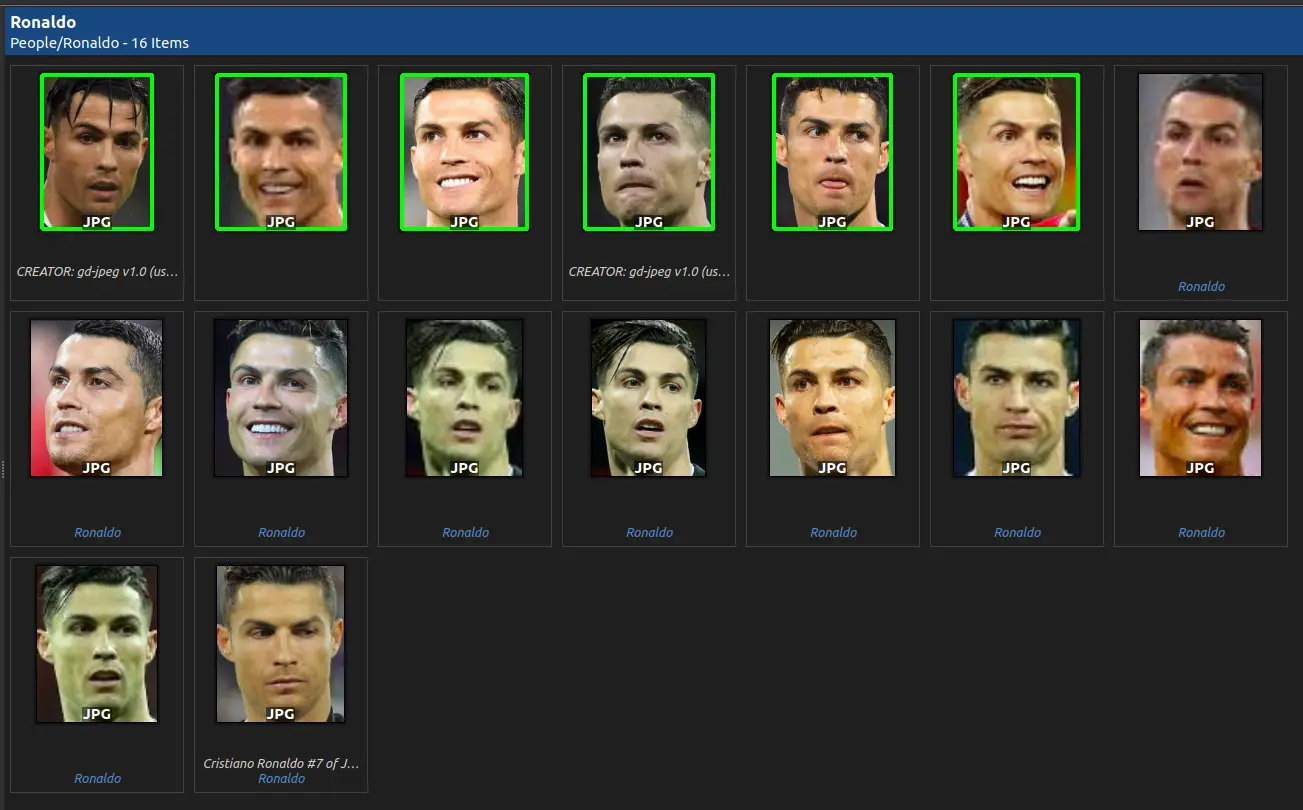

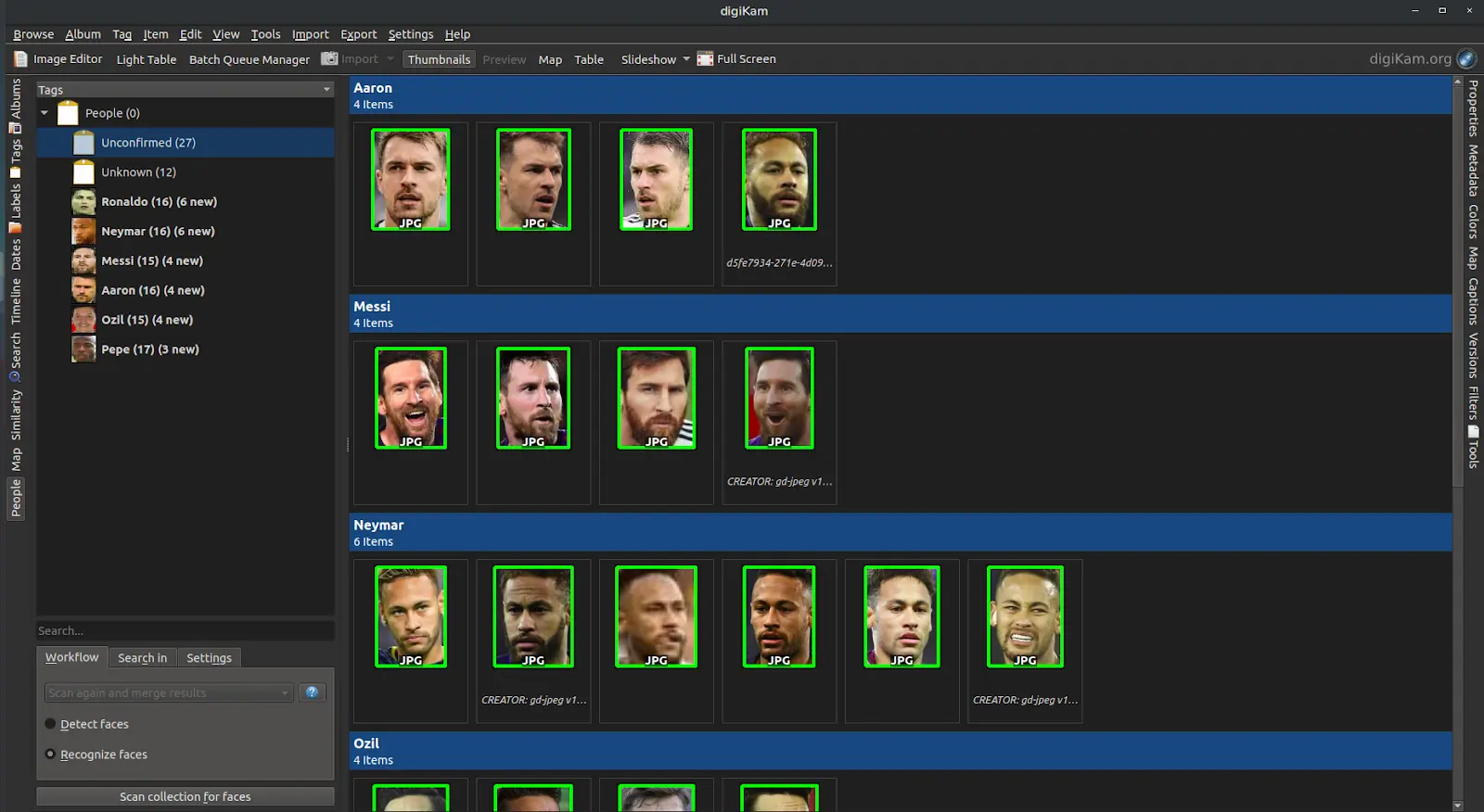

The recognition workflow is still the same as in previous versions but it includes quite a few improvements. You need to teach the neural network with some faces so that it automatically recognizes them in a collection. The user must tag some images with the same person and run the recognition process. The neural network will parse the faces already detected as unknown and compare them to ones already tagged. If new items are recognized, the automatic workflow will highlight new faces with a green border around a thumbnail and will report how many new items are registered in the face-tag. See the screenshot below taken while running the face recognition process.

Recognition can start to work with just one face tagged, whereas at least 6 items were necessary to obtain results with the previous algorithms. But of course, if more than one face is already tagged, recognition is more likely to return good results. The true positive recognition rate with deep learning is really excellent and increases to 95%, where older algorithms were not able to reach 75% in the best of cases. Recognition also includes a Sensitivity/Specificity setting to tune the accuracy of the results, but we advise you to leave the default setting as you begin experimenting with this feature on your own collection.

The performance is better than with previous versions, as the implementation supports multiple cores to speed up computations. We have also worked hard to fix serious and complex memory leaks in the face management pipeline. This hack took many months to complete, as the errors were very difficult to reproduce. You can read the long story in this Bugzilla entry. Resolving this issue allowed us to close a long list of older reports related to Face Management.
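To make the idea of comparing unknown faces with tagged ones more concrete, here is a minimal Python sketch. It is not digiKam's code: the toy three-dimensional vectors, the `recognize` helper, and the `threshold` parameter (standing in for an accuracy setting) are all assumptions made for illustration.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def recognize(unknown, tagged, threshold=0.8):
    """Return the best-matching name, or None if nothing is similar enough.

    `tagged` maps a person's name to the vectors of their confirmed faces.
    `threshold` is a hypothetical stand-in for an accuracy setting.
    """
    best_name, best_score = None, threshold
    for name, vectors in tagged.items():
        for vector in vectors:
            score = cosine_similarity(unknown, vector)
            if score >= best_score:
                best_name, best_score = name, score
    return best_name

# Toy data: one tagged face for Alice, two for Bob (hypothetical values).
tagged = {
    "Alice": [[0.9, 0.1, 0.0]],
    "Bob": [[0.0, 1.0, 0.1], [0.1, 0.9, 0.2]],
}

print(recognize([0.8, 0.2, 0.0], tagged))  # Alice
print(recognize([0.5, 0.5, 0.7], tagged))  # None: stays unknown
```

In this sketch, raising `threshold` makes matches stricter (fewer wrong names, more faces left unknown), while lowering it does the opposite.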

To complete his project, Thanh Trung Dinh presented the new deep learning faces management at Akademy 2019 held in September in Milan. The talk was recorded and is available here.

Although Thanh’s project is complete, the whole story is not and the second stage of rewriting the Face Management workflow is an ongoing process with two new students working on it this summer.

Faces Management Improvement Projects in Progress This Summer

Faces Workflow Improvements

The first project, managed by Kartik Ramesh, aims to fix all major bugs in the graphical interface and to improve the usability of tagging and managing faces. The following changes will be brought to the Face Management Workflow:

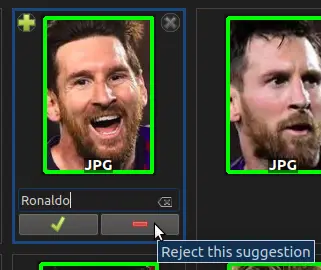

- Rejection of Face Suggestions: the Red Minus Button rejects the Face Suggestion. This is how the user indicates that the suggestion is a face, but not the one that the algorithm guessed. It moves the face back to Unknown.

- Ignoring Faces: digiKam will often detect faces that the user doesn’t wish to be recognized. With this new feature, the user can tell the algorithm to ignore such faces by using the Reject Button on Unknown Faces. Faces marked as “Ignored” will not be considered during the recognition process.

- Providing a Help Box for Face Workflow: in order to allow new users to comfortably use the Face Management Workflow, a help box has been provided with the necessary information. This may be accessed by clicking the question mark icon in the Face Settings panel in the People sidebar.

- People Names sorted by Importance: during the face recognition process, the algorithm suggests names for various faces. These names require the user’s attention in order to confirm or reject these suggestions. Instead of making the user search for the name tags, the Important tags are now pinned to the top of the People sidebar, and highlighted in bold.

- Automatic Assignment of Face Tag Icons: in an effort to make the face workflow more visual, automatic icon assignment has been added to face tags. The icon chosen to represent a particular person is the first face that gets confirmed by the User. The user is still allowed to change the icons if desired. You can see a screenshot below displaying tag icons and the Sorted sidebar.

- Sorting the Face View by Unconfirmed Faces: in order to prevent face suggestions appearing mixed with already confirmed faces, a new sorting role has been provided which will sort images based on the number of unconfirmed faces in each. This leads to face suggestions appearing collectively at the start, followed by already confirmed Faces. This feature can be accessed through View -> Sort Items -> Sort By Faces.

- Grouping Faces by Similarity: the results of the face recognition process are now grouped together based on the similarity between various Faces. This allows the user to easily select multiple faces and confirm or reject them simultaneously.
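The "Sort By Faces" ordering described in the list above can be pictured with a short Python sketch. The `Item` class and its fields are hypothetical and only illustrate the idea of putting images with the most unconfirmed faces first; they do not reflect digiKam's internal data model.

```python
from dataclasses import dataclass

@dataclass
class Item:
    name: str
    unconfirmed_faces: int  # hypothetical: suggestions still awaiting review
    confirmed_faces: int

def sort_by_unconfirmed(items):
    """Most unconfirmed faces first; ties are broken by file name."""
    return sorted(items, key=lambda item: (-item.unconfirmed_faces, item.name))

items = [
    Item("beach.jpg", unconfirmed_faces=0, confirmed_faces=3),
    Item("party.jpg", unconfirmed_faces=2, confirmed_faces=1),
    Item("hike.jpg", unconfirmed_faces=1, confirmed_faces=0),
    Item("family.jpg", unconfirmed_faces=2, confirmed_faces=4),
]

for item in sort_by_unconfirmed(items):
    print(item.name, item.unconfirmed_faces)
```

With this ordering, images that still need attention are shown together at the start, and fully confirmed images come last.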

Neural Network Improvements

The second project, managed by Minh Nghia Duong, is to improve the neural network engine used by face recognition. The following changes will be brought to the Face Recognition Algorithm:

After performance tests of the DNN face clustering algorithm gave poor latency results, we decided to use the DNN classifier method instead.

New Face Classifiers: in order to improve the processing time and the accuracy of the facial recognition module of the faces engine, new face classifiers are implemented and tested. The support vector machine classifier brings 80% accuracy and a speed of 82 ms/face. This model is reloaded and retrained every time new faces are added to the faces engine.

We have also tested the K-Nearest-Neighbor classifier, which brings 84% accuracy and a speed of 100 ms/face. This model is managed through a KD-Tree structure, stored in machine memory. The storage space for a face is reduced to 1.5 Kb.
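For readers unfamiliar with KD-Trees, the following Python sketch shows how a nearest-neighbor lookup over such a structure can work in principle. It is a simplified illustration with K = 1 and toy two-dimensional vectors; the function names and data are assumptions, not the faces engine's implementation.

```python
import math

def build_kdtree(points, depth=0):
    """Build a KD-tree from (vector, label) pairs; returns nested dicts or None."""
    if not points:
        return None
    axis = depth % len(points[0][0])
    points = sorted(points, key=lambda p: p[0][axis])
    mid = len(points) // 2
    return {
        "point": points[mid],
        "axis": axis,
        "left": build_kdtree(points[:mid], depth + 1),
        "right": build_kdtree(points[mid + 1:], depth + 1),
    }

def nearest(node, query, best=None):
    """Return (distance, label) of the stored vector closest to `query`."""
    if node is None:
        return best
    vector, label = node["point"]
    dist = math.dist(vector, query)
    if best is None or dist < best[0]:
        best = (dist, label)
    diff = query[node["axis"]] - vector[node["axis"]]
    near, far = (node["left"], node["right"]) if diff < 0 else (node["right"], node["left"])
    best = nearest(near, query, best)
    # Visit the far side only if the splitting plane is closer than the best match.
    if abs(diff) < best[0]:
        best = nearest(far, query, best)
    return best

# Hypothetical toy vectors; real face vectors have many more dimensions.
faces = [
    ([0.10, 0.20], "Alice"), ([0.15, 0.25], "Alice"),
    ([0.80, 0.90], "Bob"), ([0.85, 0.80], "Bob"),
    ([0.50, 0.10], "Carol"),
]
tree = build_kdtree(faces)
distance, name = nearest(tree, [0.82, 0.88])
print(name)  # Bob
```

The tree lets the search skip whole branches that cannot contain a closer vector, which is why it can be faster than comparing against every stored face.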

New Face Embedding Database: the faces engine now supports face classifier operations in both RAM and database. Depending on the user’s configuration, facial recognition can be performed rapidly in a machine’s memory with a storage space of about 1.5 Kb per face, or it can be performed without memory occupation, by a K-Nearest search directly on the database. We have upgraded the database schema to store face recognition data according to the new face classifier algorithms.

As you can see, work is advancing very well and we expect to publish new code later this summer, probably for digiKam 7.2.0, once all implementations have been tested and are ready for production.
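The database-backed variant can be sketched in Python with the standard `sqlite3` module. The table name, column names, and 32-bit float serialization below are hypothetical choices for illustration and are not digiKam's actual schema; the point is only that rows can be scanned one by one so that the whole set of face vectors never has to sit in memory.

```python
import heapq
import math
import sqlite3
import struct

def pack(vector):
    """Serialize a list of floats as 32-bit little-endian values."""
    return struct.pack(f"<{len(vector)}f", *vector)

def unpack(blob):
    """Inverse of pack(): 4 bytes per stored float."""
    return list(struct.unpack(f"<{len(blob) // 4}f", blob))

# Hypothetical schema, kept in an in-memory database for the example.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE face_embeddings (id INTEGER PRIMARY KEY, person TEXT, embedding BLOB)"
)
rows = [
    ("Alice", [0.10, 0.20, 0.30]),
    ("Bob", [0.90, 0.80, 0.70]),
    ("Alice", [0.15, 0.20, 0.35]),
]
conn.executemany(
    "INSERT INTO face_embeddings (person, embedding) VALUES (?, ?)",
    [(person, pack(vector)) for person, vector in rows],
)

def nearest_in_database(conn, query, k=1):
    """Stream rows from the database and keep only the k closest matches."""
    cursor = conn.execute("SELECT person, embedding FROM face_embeddings")
    scored = ((math.dist(unpack(blob), query), person) for person, blob in cursor)
    return heapq.nsmallest(k, scored)

for distance, person in nearest_in_database(conn, [0.10, 0.20, 0.31], k=2):
    print(person, round(distance, 3))
```

This trades speed for memory: every query reads the table again, whereas an in-memory structure answers faster but has to hold all vectors at once.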

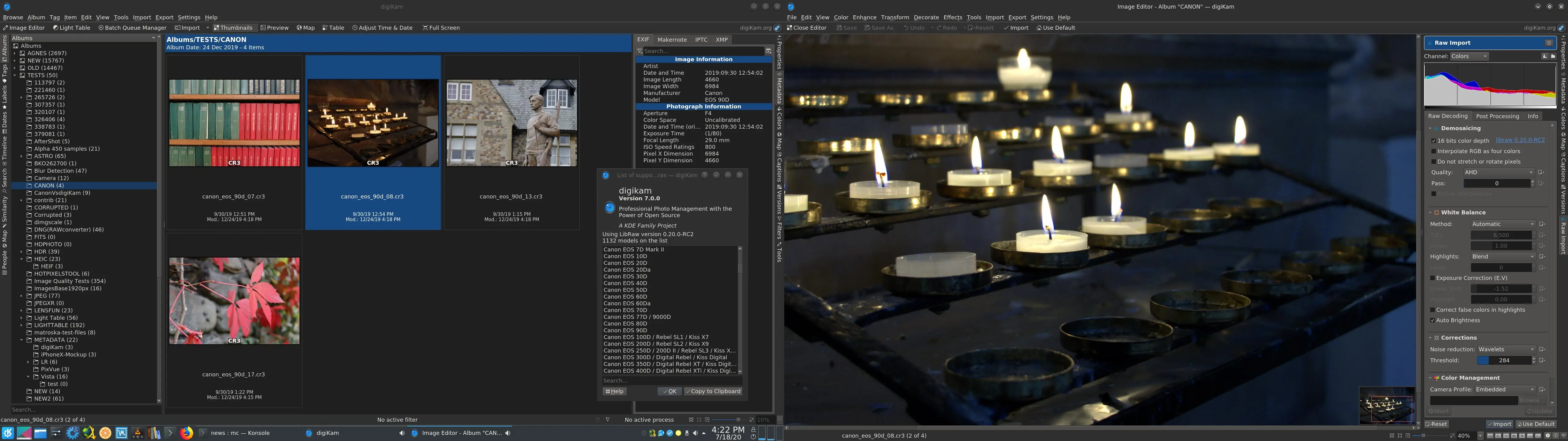

New RAW File Support, Including the Famous Canon CR3, Sony A7R4, and More…

digiKam tries to support as many digital cameras’ file formats as possible. Support for RAW files is a big challenge. Some applications have been especially created only to support RAW files from specific cameras, as this kind of support is complex, long, and hard to maintain over time.

RAW files are not like JPEG images. Nothing is standardized, and camera makers are free to change everything inside these digital containers without ever documenting it. RAW files allow camera makers to re-invent the wheel and implement hidden features, to cache metadata, and to require a powerful computer to process the data.

When you buy an expensive camera, you would expect the image provided to be seriously pre-processed by the camera firmware and ready to use immediately. This is true for JPEG, but not for RAW files. Even though JPEG is not perfect, it’s a well-standardized and well-documented format. With RAW, the format can change with each new camera release, as it depends on the camera’s sensor data, which is not necessarily processed by the camera’s firmware. This requires intensive reverse engineering that the digiKam team cannot always support well. This is why we use the powerful LibRaw library to post-process the RAW files on the computer. This library includes complex algorithms to support all kinds of different RAW file formats.

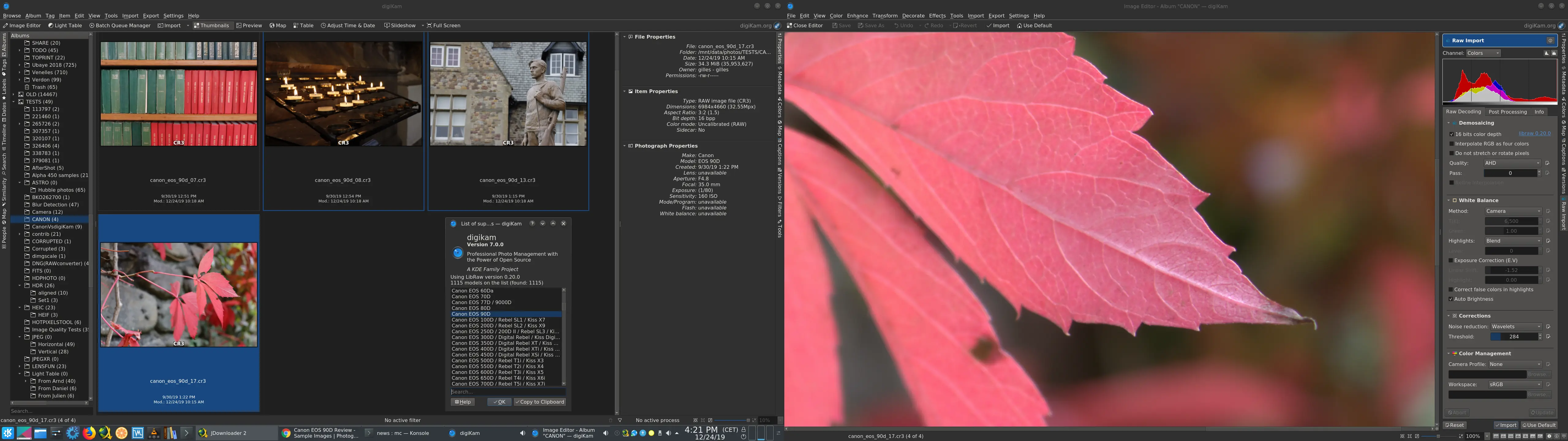

In version 7.0.0, we use the new LibRaw version 0.20, which introduces support for more than 40 new RAW formats, especially from the most recent camera models available on the market. The list includes the new Canon CR3 format and the Sony A7R4 (61 Mpx!). See the list below for details:

- Canon: PowerShot G5 X Mark II, G7 X Mark III, SX70 HS, EOS R, EOS RP, EOS 90D, EOS 250D, EOS M6 Mark II, EOS M50, EOS M200

- DJI Mavic Air, Osmo Action

- FujiFilm GFX 100, X-A7, X-Pro3

- GoPro Fusion, HERO5, HERO6, HERO7

- Hasselblad L1D-20c, X1D II 50C

- Leica D-LUX7, Q-P, Q2, V-LUX5, C-Lux / CAM-DC25

- Olympus TG-6, E-M5 Mark III

- Panasonic DC-FZ1000 II, DC-G90, DC-S1, DC-S1R, DC-TZ95

- PhaseOne IQ4 150MP

- Ricoh GR III

- Sony A7R IV, ILCE-6100, ILCE-6600, RX0 II, RX100 VII

- Zenit M

- and multiple smartphones…

In total, this LibRaw version is able to process more than 1100 RAW formats. You can find the complete list in digiKam and Showfoto through the Help/Supported RAW Camera dialog. We would like to thank the LibRaw team for sharing and maintaining this wonderful library.

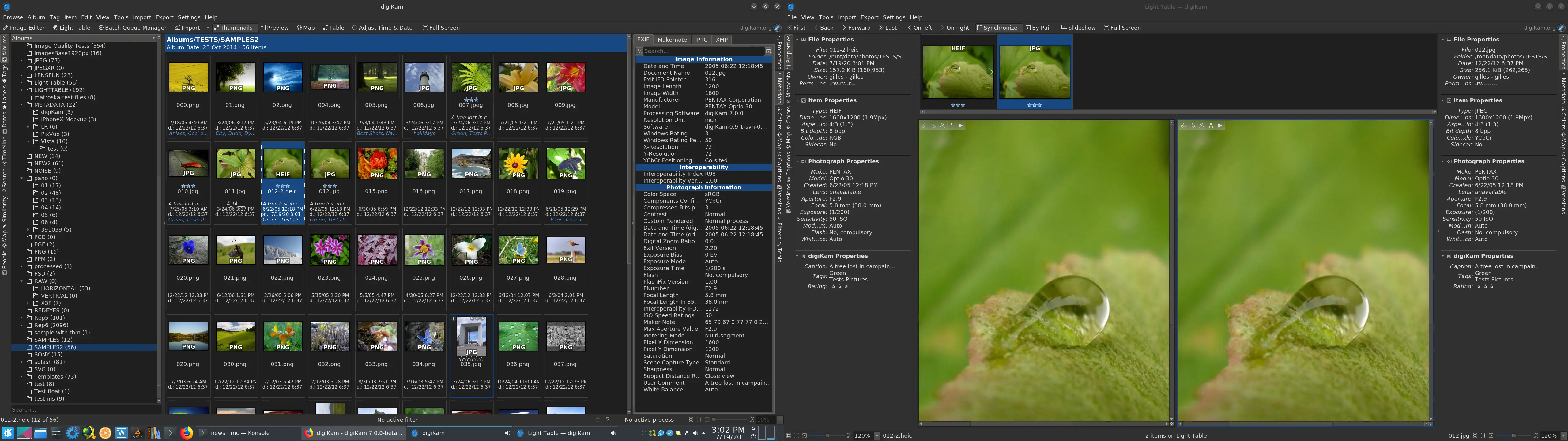

HEIF Image Format Support Improved

With the 6.4.0 release, we started supporting a new image format: HEIF. This container is used by Apple to store photos on iOS devices and also by Canon to store HDR images with the 1DX Mark III camera.

HEIF is a file format for individual images and image sequences. It was developed by the Moving Picture Experts Group (MPEG). The MPEG group claims that twice as much information can be stored in a HEIF image as in a JPEG image of the same size, resulting in a better quality image. HEIF also supports animation and is capable of storing more information than an animated GIF at a fraction of the size.

Compression in HEIF is delegated to an extra codec and currently x265 is supported. This codec gives excellent results when encoding images with small size without losing information. Metadata, preview, and color management are also supported.

HEIF can also support HDR if an extra codec is compiled with a pixel color depth higher than 8 bits. In this case, digiKam can store and edit an image without losing quality, since we have supported HDR for a while.

Another important point, besides being able to decode or encode HEIF image contents, is to populate the database with the main shot information captured by the camera. The goal is to be able to use technical criteria in a search engine later to find items in huge collections. With this new digiKam version, we fully support Exif, IPTC, and XMP metadata extraction from HEIF, using the libheif shared library. HEIF metadata changes are currently supported through XMP sidecar files, as no write support is available yet.

The plan for the future is to support complex HEIF structures such as image sequences and derivations. libheif has also recently introduced support for the AVIF image format, another photo container, so digiKam will also inherit this feature in the next releases.
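To give an idea of what an XMP sidecar contains, here is a small Python sketch that builds a minimal XMP packet holding keywords in the standard `dc:subject` property. The helper names are made up for this example, and the file naming (appending `.xmp` to the image name) is an assumption; the sidecars that digiKam writes contain much more than this.

```python
import xml.etree.ElementTree as ET

NS = {
    "x": "adobe:ns:meta/",
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "dc": "http://purl.org/dc/elements/1.1/",
}
for prefix, uri in NS.items():
    ET.register_namespace(prefix, uri)

def build_sidecar(keywords):
    """Return a minimal XMP packet storing `keywords` in dc:subject."""
    xmpmeta = ET.Element(f"{{{NS['x']}}}xmpmeta")
    rdf = ET.SubElement(xmpmeta, f"{{{NS['rdf']}}}RDF")
    description = ET.SubElement(rdf, f"{{{NS['rdf']}}}Description")
    description.set(f"{{{NS['rdf']}}}about", "")
    subject = ET.SubElement(description, f"{{{NS['dc']}}}subject")
    bag = ET.SubElement(subject, f"{{{NS['rdf']}}}Bag")
    for keyword in keywords:
        ET.SubElement(bag, f"{{{NS['rdf']}}}li").text = keyword
    return ET.tostring(xmpmeta, encoding="unicode")

def sidecar_name(image_name):
    # Assumption: the sidecar sits next to the image, with ".xmp" appended.
    return image_name + ".xmp"

print(sidecar_name("IMG_0001.heic"))
print(build_sidecar(["holiday", "beach"]))
```

Because the changes live in a separate file, the original HEIF image is never modified.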

Binary Bundle Improvements With Flatpak Support

With this new release we worked a lot on all implementations to support the new Qt framework versions. Qt 5.15 is now fully supported, and the code will be mostly ready to compile with the upcoming Qt 6, planned for the end of the year.

All binary bundles have switched to the latest Qt 5.14.2 LTS. Under Linux and macOS, we use QtWebEngine instead of QtWebKit to display web content, such as cloud web service login pages. All bundles have switched to the latest KF5 5.70.0. This main upgrade includes plenty of fixes from KDE frameworks, particularly an old fix to support the GIMP XCF file format >= 2.10.

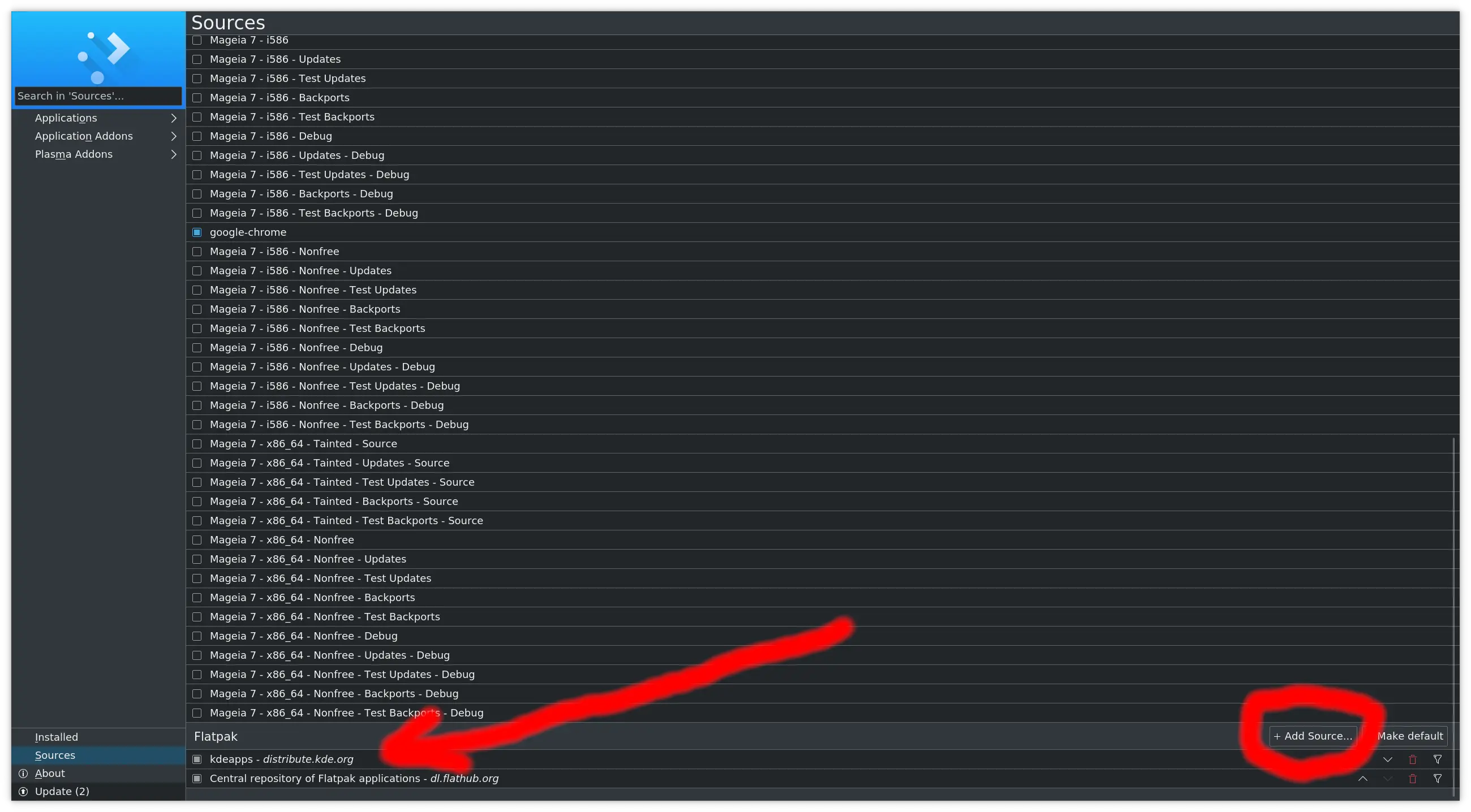

We now officially support two Flatpak Linux bundles. One is built nightly from the latest git source by the KDE continuous integration workflow. This lets anyone quickly check the latest changes applied by developers. Another Flatpak is built from the official stable release and is available through the Flathub hosting service.

You can install the digiKam Flatpak bundle using a Linux desktop installer such as Plasma’s Discover or GNOME Software.

Finally, we added Microsoft Visual C++ support through a dedicated Continuous Integration workflow to compile all code with this compiler. The goal is to later publish an official release of digiKam in the Microsoft Windows Store.

Of course we continue to support Linux AppImage 64 and 32 bits, Windows installer 64 and 32 bits, and macOS Package, as with previous digiKam releases.

Application New Features and Improvements

An unsorted list of features and improvements included in this release:

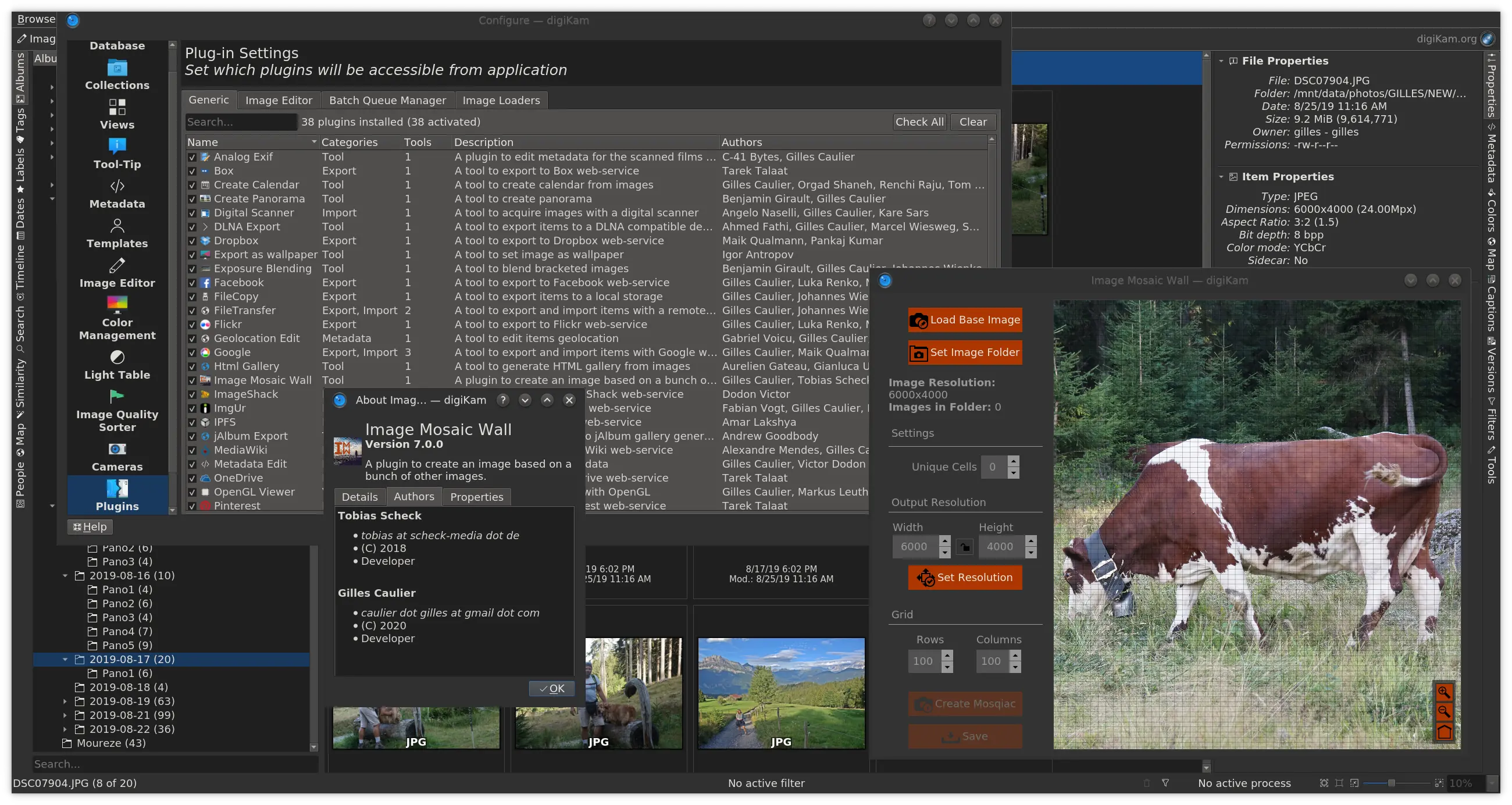

- A new tool ImageMosaicWall has been introduced as a 3rd party plugin to create an image based on a bunch of other photos. This tool is included in all binary bundles.

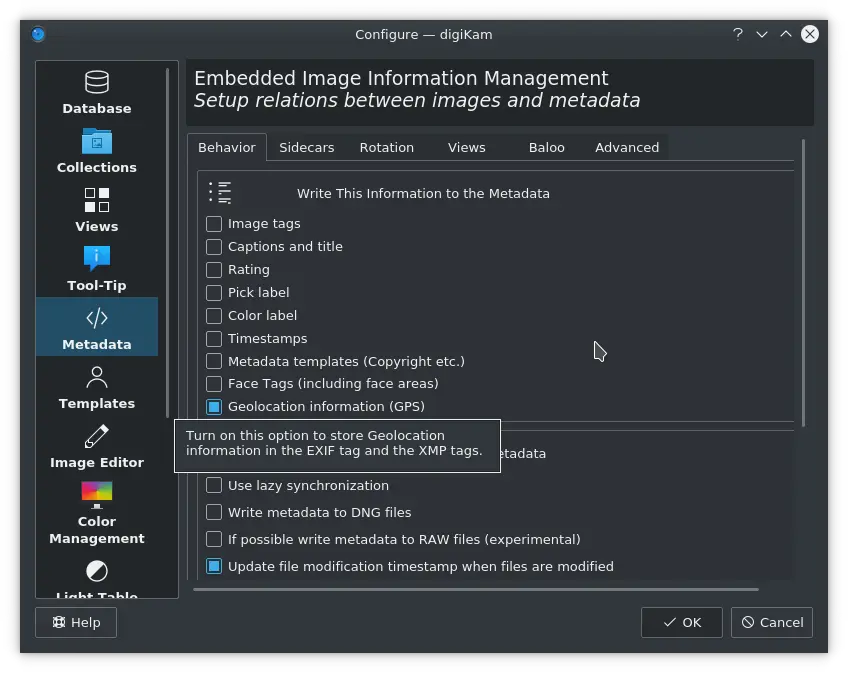

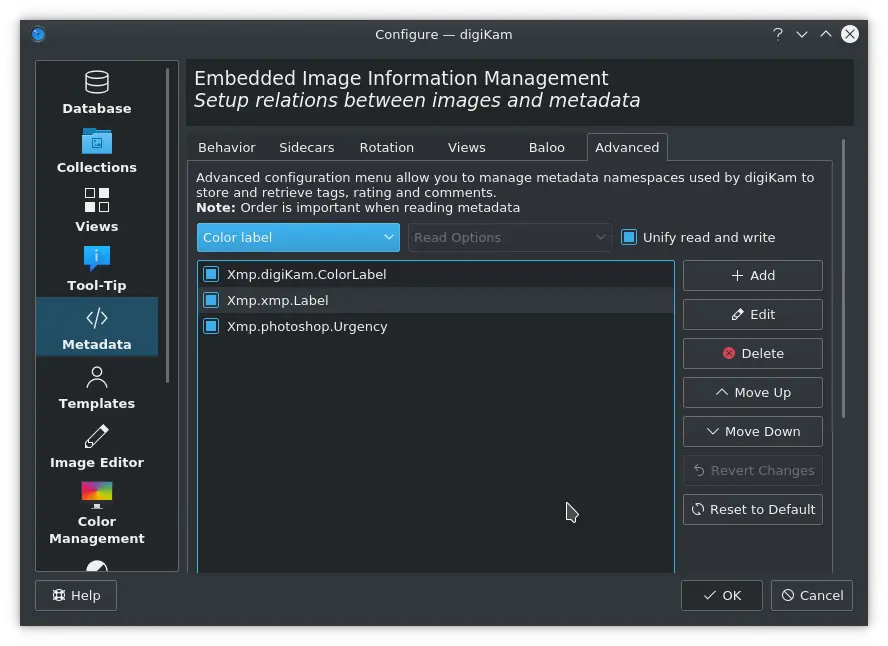

- Regarding metadata management support, we have added new options to write geolocation information into the file’s metadata. Also, the Metadata Advanced Settings panel can manage where color label information is retrieved from or stored.

- We have improved the Windows port with support for the Universal Naming Convention for network paths and for Unicode paths encoded in UTF-16, which differ from those on Linux operating systems.

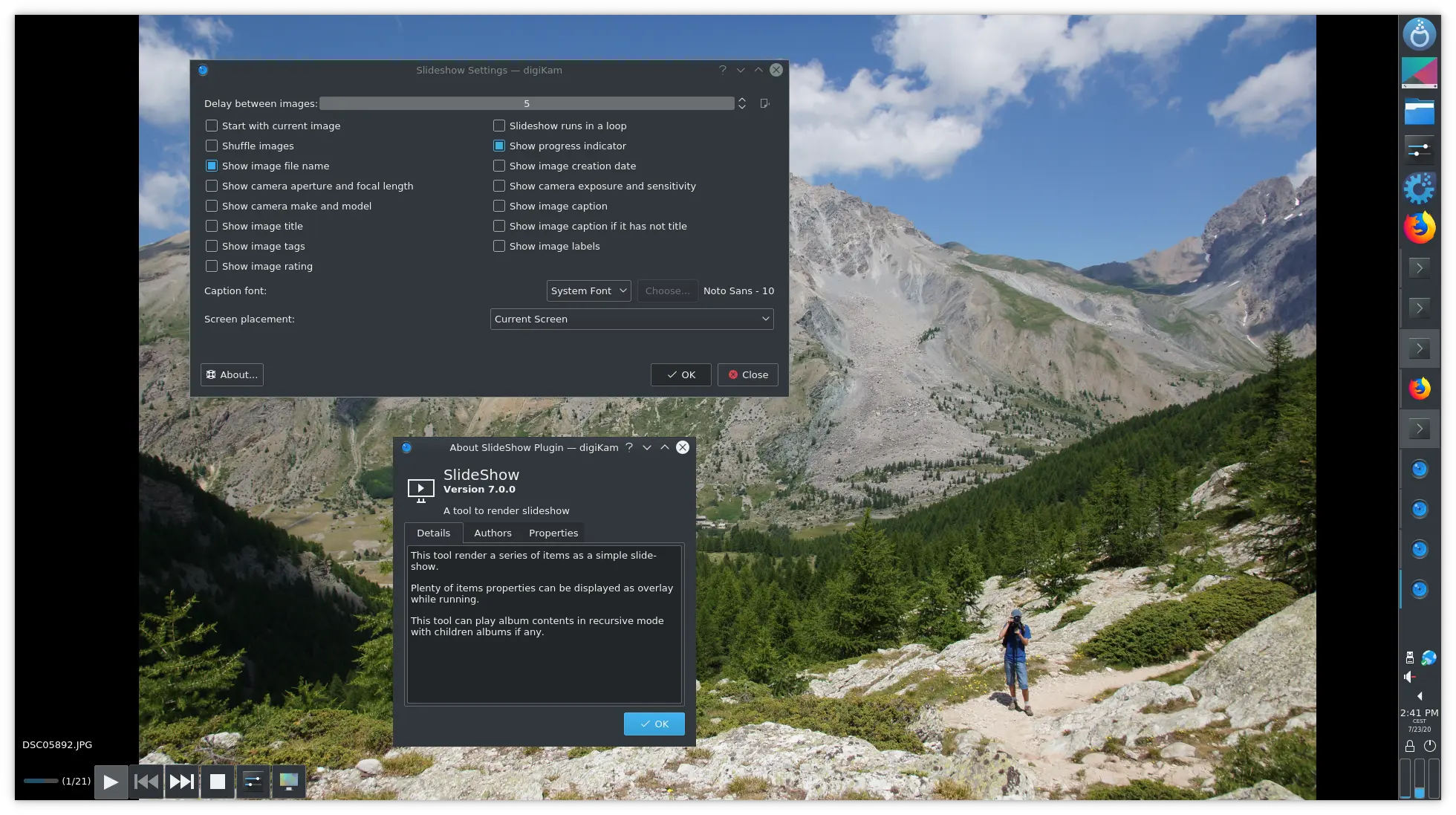

- The SlideShow tool is now ported as a plugin for digiKam and Showfoto, and we introduce a new setting to play images in shuffle mode. The SlideShow tool settings have moved from the application config panel to a dialog hosted by the plugin. This lets you change settings on the fly while the tool is working.

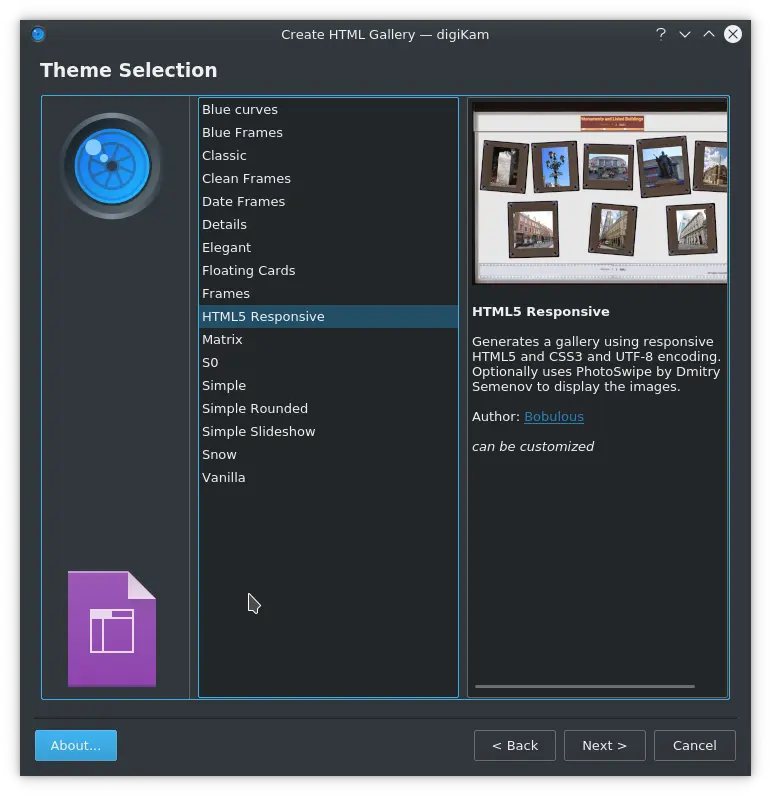

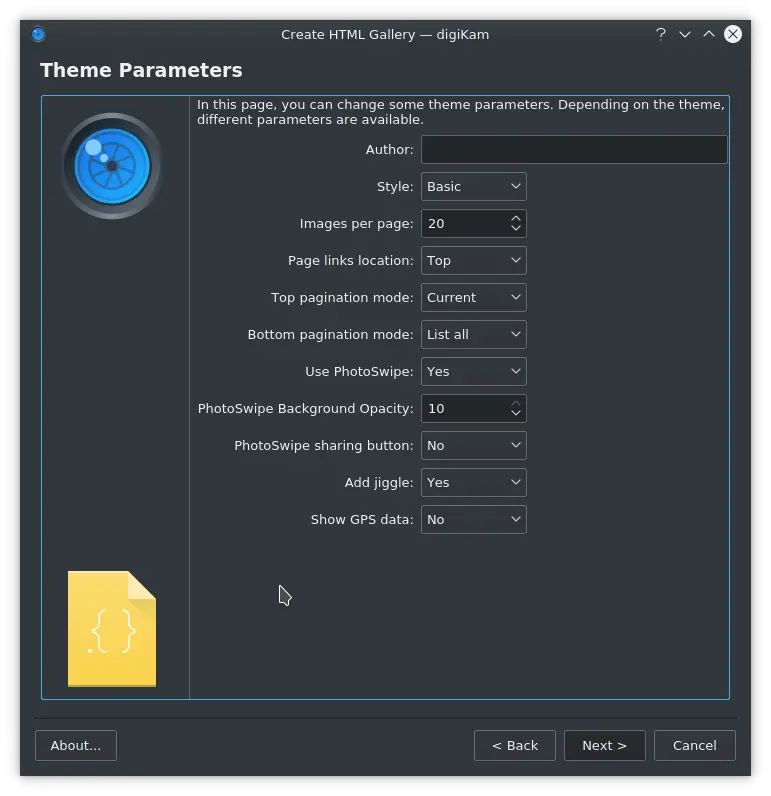

- The HTMLGallery plugin introduces a new theme named “Html5Responsive”. This theme allows digiKam to generate a photo gallery which is responsive (and should resize itself to display nicely whether shown on a smartphone screen or a desktop computer monitor) using HTML5 and CSS3. The resulting pages use UTF-8 character encoding so that non-Latin characters can be displayed in photo captions and so on.

This theme comes with a variety of visual styles which give the resulting gallery pages very different looks, as described below.

Basic: a simple style with no frills. “Jiggle” mode has no effect on the Basic style. See the example gallery here.

Lightbox: this style gives the appearance of photographic slides being viewed on a lightbox, and strips of photographic negative film act as a decorative trim. “Jiggle” mode causes the slides to be rotated a little bit, to give the impression that a busy photographer has slung them down onto the lightbox without time to line them up neatly. See the example gallery here.

Feed: inspired by social media feeds, this style creates a vertical column of image thumbnails with dates and caption text. “Jiggle” currently has no effect on the Feed style. See the example gallery here.

Brown Card: based on old photo albums of brown card, with photo corners and rough-edged photo cards. By default thumbnails are shown in sepia tones, but “Jiggle” mode causes the thumbnails to use a mixture of different levels of sepia and greyscale. See the example gallery here.
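Returning to the Windows port improvements mentioned earlier in this list, the short Python sketch below illustrates what a Universal Naming Convention (UNC) path looks like and how its text is laid out in UTF-16. The server, share, and folder names are made up, and the standard `pathlib` module is used purely for illustration; digiKam handles this in its own C++ code.

```python
from pathlib import PureWindowsPath

# Hypothetical network location: \\server\share followed by folders.
folder = "été"
path = PureWindowsPath(r"\\nas\photos\2020") / folder / "IMG_0001.heic"

print(path.drive)      # \\nas\photos (server and share together)
print(path.parts[1:])  # the folders and file name below the share

# UTF-16 uses two bytes for each of these characters,
# while UTF-8 uses one or two bytes depending on the character.
utf16 = folder.encode("utf-16-le")
utf8 = folder.encode("utf-8")
print(len(utf16), len(utf8))
```

The server and share take the place of a drive letter, which is why such paths need dedicated handling compared with ordinary local paths.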

Final Words

As you can see, digiKam version 7.0.0 has a lot going for it. The number of Bugzilla entries closed for this release alone is impressive, with more than 750 reports closed in one year of development. We have never reached this level before.

We would like to thank all users for their support and donations, and all contributors, students, and testers who allowed us to improve and achieve this release.

digiKam 7.0.0 source code tarball, Linux 32/64 bits AppImage bundles, macOS package and Windows 32/64 bits installers can be downloaded from this repository.

We wish you happy digiKaming this summer…